Open-source video generation has reached a point where anyone with a decent PC can create short AI videos right at home. CogVideoX-2B stands out as one of the most accessible options in this space.

Developed by THUDM, this 2-billion-parameter model delivers solid text-to-video results while running on consumer hardware.

Many creators now prefer it as their starting point for local setups because it balances quality, speed, and lower resource needs compared to larger models.

This guide walks through everything needed to get CogVideoX-2B working smoothly on a personal computer.

It covers hardware checks, two installation methods, model downloads, first video generation, image-to-video workflows, and fixes for common problems.

Whether the goal is quick experiments or building a reliable local pipeline, these steps provide a clear path forward.

Why CogVideoX-2B Works Well for Local Setups

CogVideoX-2B generates videos up to 6 seconds long at 720×480 resolution and 8 frames per second. Its smaller size compared with the 5B variants makes it practical for mid-range GPUs.

It handles motion, scenes, and basic prompt instructions effectively, especially after good prompt engineering.
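The 720×480, 8 fps, 6-second output is more demanding than it sounds, because the transformer attends over every compressed patch of the clip at once. The sketch below shows the arithmetic. It assumes the compression factors described for CogVideoX's 3D causal VAE (8× spatial, 4× temporal, first frame kept) and a transformer patch size of 2. Treat these as assumptions to check against the model's config files, not authoritative values.

```python
def latent_shape(frames: int, height: int, width: int,
                 temporal_factor: int = 4, spatial_factor: int = 8) -> tuple[int, int, int]:
    """Latent (frames, height, width) after the VAE.

    Assumes a causal VAE that keeps the first frame and compresses the
    remaining frames by temporal_factor, and each spatial side by spatial_factor.
    """
    latent_frames = (frames - 1) // temporal_factor + 1
    return latent_frames, height // spatial_factor, width // spatial_factor


def video_tokens(frames: int, height: int, width: int, patch_size: int = 2) -> int:
    """Number of video patches the transformer processes (text tokens excluded)."""
    t, h, w = latent_shape(frames, height, width)
    return t * (h // patch_size) * (w // patch_size)


if __name__ == "__main__":
    # 6 seconds at 8 fps plus the initial frame -> 49 frames at 720x480
    print(latent_shape(49, 480, 720))   # (13, 60, 90)
    print(video_tokens(49, 480, 720))   # 17550
```

Roughly 17,500 video tokens per clip is why memory, not raw compute, is usually the first limit on consumer cards, and why fewer frames or a lower resolution are the quickest ways out of an out-of-memory error.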

The 2B model is released under the Apache 2.0 license, which allows users to run it for personal projects, client work, or commercial videos without license fees, provided the license and notice requirements are kept. The 5B variants use a separate CogVideoX license, so check it before using them commercially.

No watermarks appear on outputs, and everything stays private on the local machine. This freedom removes many barriers that cloud services impose.

System Hardware Requirements

Before installation, check whether the current setup can handle the workload. Real-world performance depends heavily on GPU VRAM.

Minimum Requirements

- GPU: NVIDIA GTX 1080 Ti (11GB VRAM) or an equivalent card; 12GB or more is preferable, and cards at this level need CPU offloading or quantized weights

- RAM: 16GB system memory

- Storage: 50GB free space (models and dependencies take room)

- OS: Windows 10/11 or Linux (Ubuntu recommended)

Recommended Setup

- GPU: RTX 3060 12GB, RTX 4070, or higher (RTX 40-series performs best; 12GB cards still benefit from offloading or quantized weights)

- VRAM: 16GB+ for comfortable FP16 operation; 24GB+ for smoother experience

- RAM: 32GB+

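- CUDA: Version 12.1 or 12.4, matching the CUDA version of the installed PyTorch build (PyTorch's pip wheels bundle their own CUDA runtime, so the full toolkit is mainly needed for compiling extensions)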

For FP16 inference, expect around 18GB peak usage during decoding. Quantized INT8 or FP8 versions drop this to 8-12GB, making lower-end cards viable with some speed trade-offs.
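Most of the savings from quantization come from storing each weight in fewer bytes. The back-of-the-envelope sketch below estimates weight memory only. The parameter counts are rounded assumptions (about 2B for the transformer, about 4.7B for the T5-XXL encoder), and real peak usage is higher because activations and VAE decoding come on top.

```python
# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1, "fp8": 1}


def weight_gb(num_params: float, precision: str) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    TRANSFORMER_PARAMS = 2e9   # CogVideoX-2B transformer, rounded
    T5_ENCODER_PARAMS = 4.7e9  # assumption: approximate size of the T5-XXL encoder
    for precision in ("fp16", "fp8"):
        print(precision,
              f"transformer ~{weight_gb(TRANSFORMER_PARAMS, precision):.1f} GB,",
              f"text encoder ~{weight_gb(T5_ENCODER_PARAMS, precision):.1f} GB")
```

Under these assumptions the text encoder is larger than the 2B transformer itself, which is why the FP8 T5-XXL encoder saves so much memory.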

Always install the latest NVIDIA drivers and verify CUDA compatibility. Python 3.10 or 3.11 works best, along with Git for cloning repositories.
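A short check script can confirm the Python version, the PyTorch build, and the detected VRAM before installing anything else. This is a sketch that uses only standard PyTorch calls and assumes the first GPU (index 0) is the one that will run the model.

```python
import sys


def python_version_ok(version: tuple = tuple(sys.version_info[:2])) -> bool:
    """True for Python 3.10 or 3.11, the versions this guide recommends."""
    return tuple(version) in ((3, 10), (3, 11))


def gpu_report() -> str:
    """Summarize the PyTorch build and first GPU, or explain what is missing."""
    try:
        import torch  # imported lazily so the script still runs without PyTorch
    except ImportError:
        return "PyTorch is not installed"
    if not torch.cuda.is_available():
        return f"PyTorch {torch.__version__} cannot see a CUDA GPU"
    props = torch.cuda.get_device_properties(0)
    vram_gb = props.total_memory / 1024**3
    return (f"PyTorch {torch.__version__} (CUDA {torch.version.cuda}), "
            f"{props.name} with {vram_gb:.1f} GB VRAM")


if __name__ == "__main__":
    print("Python version OK:", python_version_ok())
    print(gpu_report())
```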

Method 1: The Easiest One-Click Installation Using Pinokio and CogStudio

Many users want to avoid command lines entirely. Pinokio simplifies this by handling environments, dependencies, and updates automatically.

Start by visiting the official Pinokio website and downloading the version for your operating system. Run the installer and complete the setup.

Once Pinokio is open, search for “CogStudio” or “CogVideo” in the app browser and click Install. The installer pulls everything needed, including the Python environment, dependencies, and model support.

After installation, launch the app. CogStudio offers CPU offloading options that let cards with as little as 4-8GB VRAM run the model, at the cost of slower generation. Adjust these settings in the interface to lower memory usage.

Generate the first test video directly from the built-in workflow. This method suits beginners who want fast results without deep technical configuration.

Pinokio also manages updates. When newer versions or fixes are released, the app notifies the user and applies them with one click, which keeps the setup current without manual intervention.

Method 2: Comprehensive ComfyUI Setup (Recommended for Advanced Control)

ComfyUI offers more flexibility and customization for serious users. This method takes longer but delivers better long-term results.

First, install base ComfyUI, either from the official GitHub repository or through Pinokio. Once it runs, open the Custom Nodes Manager (provided by the ComfyUI-Manager extension, which must be installed first if it is missing), search for “ComfyUI-CogVideoXWrapper” by kijai, and install it. Restart ComfyUI afterward.

Next, install acceleration backends for better speed:

- onediff

- onediffx

- nexfort

Install these through the manager or with pip from a terminal, using the same Python environment that ComfyUI runs in, as shown in the wrapper documentation. These engines optimize inference and can substantially reduce generation times.
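Because these backends are plain Python packages, a quick way to confirm they landed in the right environment is to check whether they can be imported. Run the sketch below with the Python interpreter ComfyUI itself uses (for example, the embedded one in the Windows portable build). It assumes the import names match the package names listed above.

```python
import importlib.util

# Assumption: each package is importable under the same name it is installed as.
BACKENDS = ("onediff", "onediffx", "nexfort")


def backend_status(modules=BACKENDS) -> dict[str, bool]:
    """Map each module name to whether it can be found in this environment."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


if __name__ == "__main__":
    for name, ok in backend_status().items():
        print(f"{name}: {'found' if ok else 'missing'}")
```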

This setup allows node-based workflows. Users can build, save, and reuse complex pipelines for different video styles or batch processing.

Downloading and Placing the Right Model Weights

Head to Hugging Face and locate the official CogVideoX-2B repository from THUDM or mirrored versions. Download these key files:

- Transformer model (main weights)

- VAE checkpoint

- Quantized T5-XXL FP8 text encoder (strongly recommended to save memory)
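Instead of clicking through files by hand, the huggingface_hub library can fetch only the parts that are needed. The sketch below assumes the repository id THUDM/CogVideoX-2b and the standard diffusers layout (one folder per component plus model_index.json); verify both on the model page. The ComfyUI wrapper may expect a different arrangement, and the FP8 text encoder usually comes from a separate file.

```python
# Assumption: the repository uses the standard diffusers folder-per-component layout.
REPO_ID = "THUDM/CogVideoX-2b"


def allow_patterns(components: list[str]) -> list[str]:
    """Glob patterns for snapshot_download; model_index.json ties the parts together."""
    return ["model_index.json"] + [f"{name}/*" for name in components]


def download(local_dir: str, components: list[str]) -> str:
    """Download only the selected components and return the local folder path."""
    from huggingface_hub import snapshot_download  # pip install huggingface_hub
    return snapshot_download(repo_id=REPO_ID, local_dir=local_dir,
                             allow_patterns=allow_patterns(components))


# Usage (needs network access):
# download("models/CogVideoX-2b", ["transformer", "vae", "scheduler"])
```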

Avoid full-precision text encoders unless the GPU has 24GB+ VRAM. Place files in the correct ComfyUI folders:

- Models: ComfyUI/models/checkpoints/ or the CogVideoX-specific subfolder

- VAEs: ComfyUI/models/vae/

- Text encoders: ComfyUI/models/text_encoders/ (older ComfyUI versions use ComfyUI/models/clip/)

Keep a clean folder structure. Create a dedicated “CogVideoX” folder inside the models directory to avoid mix-ups, and double-check file names and paths, because mismatches cause loading errors.
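Path mistakes are easy to catch with a small script that lists what is actually in each folder. The folder map below is hypothetical; adjust it to match the folders your loader nodes read from. Only files directly inside each folder are listed, so a diffusers-style model stored in subfolders will show up as its top-level files only.

```python
from pathlib import Path

# Hypothetical expected layout, relative to the ComfyUI root folder.
EXPECTED = {
    "vae": "models/vae",
    "text encoder": "models/text_encoders",
    "CogVideoX models": "models/CogVideoX",
}


def check_layout(comfy_root: str, expected: dict[str, str] = EXPECTED) -> dict[str, list[str]]:
    """For each expected folder, list the files found (empty list = missing or empty)."""
    report = {}
    for label, rel in expected.items():
        folder = Path(comfy_root) / rel
        if folder.is_dir():
            report[label] = sorted(p.name for p in folder.iterdir() if p.is_file())
        else:
            report[label] = []
    return report


if __name__ == "__main__":
    for label, files in check_layout("ComfyUI").items():
        print(f"{label}: {', '.join(files) if files else 'MISSING or empty'}")
```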

Running the First Text-to-Video Generation

Load a default workflow in ComfyUI for CogVideoX-2B. Connect the model loader nodes to the sampler.

Key parameters to adjust:

- Frames: Use counts of the form 8N + 1, up to 49 (49 frames gives about 6 seconds at 8fps)

- Steps: Start with 30-50 for quality vs speed balance

- CFG Scale: 6-8 works for most prompts

- Seed: Fix a number for reproducible tests, then randomize for variations
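The frame rule is easy to get wrong when typing values into a node, so a small helper can validate a count or round it to the nearest valid one. This sketch assumes the 8N + 1 rule with a 49-frame maximum for CogVideoX-2B and the native 8 fps playback rate.

```python
def valid_frame_count(frames: int, max_frames: int = 49) -> bool:
    """True if frames has the form 8N + 1 and does not exceed max_frames."""
    return 1 <= frames <= max_frames and (frames - 1) % 8 == 0


def nearest_valid(frames: int, max_frames: int = 49) -> int:
    """Clamp to [1, max_frames], then round down to the closest 8N + 1 value."""
    frames = max(1, min(frames, max_frames))
    return frames - (frames - 1) % 8


def clip_seconds(frames: int, fps: int = 8) -> float:
    """Playback length in seconds at the given frame rate."""
    return frames / fps


if __name__ == "__main__":
    print(valid_frame_count(49), nearest_valid(40), clip_seconds(49))  # True 33 6.125
```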

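Write a detailed prompt describing action, camera movement, lighting, and style. Generate the clip and preview it. First attempts might look rough, but adjustments improve results quickly. Generation time depends heavily on the GPU, step count, and offloading settings: THUDM's model card lists roughly 90 seconds for 50 steps on a single A100, so expect longer times on 12-16GB consumer cards, especially with CPU offloading enabled.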
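For readers who want to script the same settings outside ComfyUI, the Hugging Face diffusers library provides a CogVideoXPipeline. The sketch below maps the parameters above onto it. The defaults and the choice of model CPU offloading are assumptions aimed at a 12-16GB card, and the ComfyUI wrapper's nodes may name these settings differently. Heavy imports sit inside generate() so the settings helper can be used without a GPU.

```python
from typing import Optional


def generation_kwargs(prompt: str, steps: int = 50, frames: int = 49,
                      cfg: float = 6.0, negative_prompt: Optional[str] = None) -> dict:
    """Keyword arguments for CogVideoXPipeline, mirroring the settings listed above."""
    kwargs = {"prompt": prompt, "num_inference_steps": steps,
              "num_frames": frames, "guidance_scale": cfg}
    if negative_prompt:
        kwargs["negative_prompt"] = negative_prompt
    return kwargs


def generate(prompt: str, seed: int = 42, out_path: str = "output.mp4") -> str:
    import torch
    from diffusers import CogVideoXPipeline
    from diffusers.utils import export_to_video

    pipe = CogVideoXPipeline.from_pretrained("THUDM/CogVideoX-2b", torch_dtype=torch.float16)
    pipe.enable_model_cpu_offload()  # moves sub-models to the GPU only while they run
    generator = torch.Generator(device="cuda").manual_seed(seed)  # fixed seed for repeatable tests
    frames = pipe(**generation_kwargs(prompt), generator=generator).frames[0]
    export_to_video(frames, out_path, fps=8)
    return out_path
```

Calling generate("A young woman in a red dress walks slowly through a bustling night market...") downloads the weights on first use and writes output.mp4; change the seed to explore variations.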

Mastering Prompt Sensitivity

CogVideoX-2B responds best to very descriptive, structured prompts. Simple sentences often produce weak or random motion. Break prompts into clear elements: subject, action, environment, camera, mood, and style.

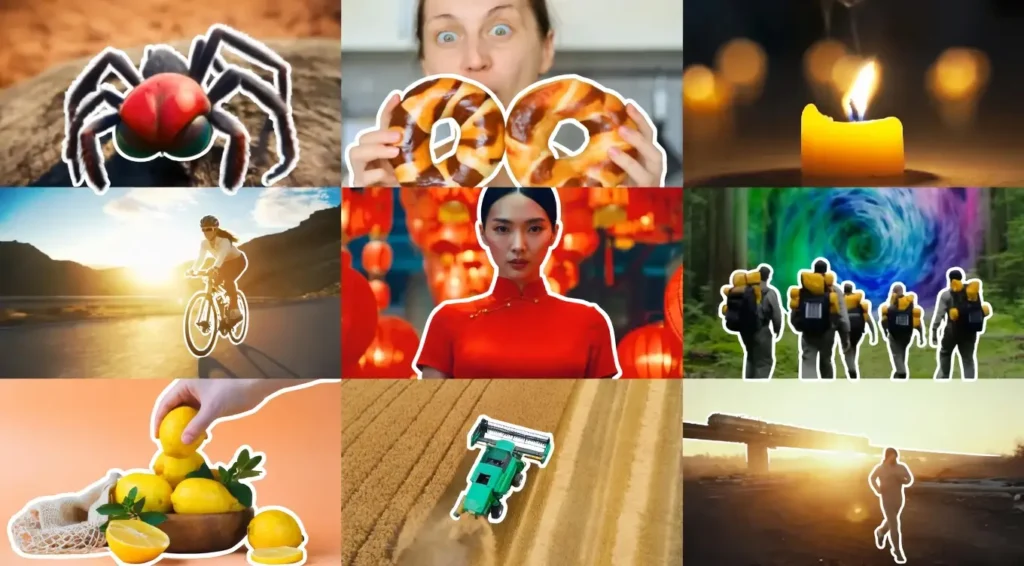

Use tools like ChatGPT or Gemini to expand basic ideas into rich descriptions. Example structure: “A young woman in a red dress walks slowly through a bustling night market in Tokyo, neon lights reflecting on wet streets, cinematic camera pan from left to right, warm color grading, highly detailed, realistic motion.”

Test variations and note which phrases trigger better coherence. Negative prompts help avoid unwanted artifacts like blurring or distortions.
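Keeping prompt elements in separate fields makes it easier to vary one element (say, the camera move) while holding the rest fixed. The sketch below is illustrative: the field names and the sample negative prompt are assumptions, not settings the model requires. Very long LLM-expanded prompts may also be truncated, since diffusers' CogVideoXPipeline caps prompts at 226 tokens by default.

```python
from dataclasses import dataclass

# Example negative prompt; tune it to the artifacts you actually see.
DEFAULT_NEGATIVE = "blurry, distorted, low quality, flickering"


@dataclass
class PromptParts:
    """The six prompt elements suggested above."""
    subject: str
    action: str
    environment: str
    camera: str
    mood: str
    style: str

    def render(self) -> str:
        # Subject and action form the opening clause; the rest follow as comma-separated details.
        details = [self.environment, self.camera, self.mood, self.style]
        return ", ".join([f"{self.subject} {self.action}"] + [d for d in details if d]) + "."
```

Rebuilding the example prompt from parts:

```python
parts = PromptParts(
    subject="A young woman in a red dress",
    action="walks slowly through a bustling night market in Tokyo",
    environment="neon lights reflecting on wet streets",
    camera="cinematic camera pan from left to right",
    mood="warm color grading",
    style="highly detailed, realistic motion",
)
print(parts.render())
```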

Image-to-Video Workflow

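The CogVideoX family supports image-to-video generation for greater control: upload a starting image and combine it with a motion prompt. Note that THUDM's official image-to-video checkpoint is CogVideoX-5B-I2V rather than a 2B model, so this workflow needs more VRAM than text-to-video with CogVideoX-2B.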

In ComfyUI, load the I2V variant or appropriate node. The model respects the source image structure while adding movement. Adjust strength parameters to control how closely the video sticks to the original frame.

This works well for animating still artwork, product visuals, or character performances. Maintain aspect ratio consistency between input image and output settings for best results.
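Matching the aspect ratio usually means cropping the source image to 3:2 (720×480) before resizing, rather than stretching it. The sketch below computes a centered crop box and, as an optional diffusers path outside ComfyUI, feeds the result to CogVideoXImageToVideoPipeline. The checkpoint id THUDM/CogVideoX-5b-I2V, BF16 weights, and sequential offloading are assumptions to verify against the model card.

```python
def center_crop_box(width: int, height: int,
                    target_ratio: float = 720 / 480) -> tuple[int, int, int, int]:
    """(left, top, right, bottom) box that crops to target_ratio without stretching."""
    if width / height > target_ratio:      # too wide: trim the sides
        new_w = round(height * target_ratio)
        left = (width - new_w) // 2
        return left, 0, left + new_w, height
    new_h = round(width / target_ratio)    # too tall: trim top and bottom
    top = (height - new_h) // 2
    return 0, top, width, top + new_h


def animate(image_path: str, prompt: str, out_path: str = "i2v.mp4", seed: int = 42) -> str:
    import torch
    from diffusers import CogVideoXImageToVideoPipeline
    from diffusers.utils import export_to_video, load_image

    image = load_image(image_path)  # PIL image
    image = image.crop(center_crop_box(*image.size)).resize((720, 480))
    pipe = CogVideoXImageToVideoPipeline.from_pretrained(
        "THUDM/CogVideoX-5b-I2V", torch_dtype=torch.bfloat16)
    pipe.enable_sequential_cpu_offload()  # trades speed for much lower VRAM
    frames = pipe(prompt=prompt, image=image, num_frames=49, num_inference_steps=50,
                  guidance_scale=6.0,
                  generator=torch.Generator(device="cuda").manual_seed(seed)).frames[0]
    export_to_video(frames, out_path, fps=8)
    return out_path
```

For a 1920×1080 still, center_crop_box returns (150, 0, 1770, 1080), keeping the central 1620×1080 region.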

Troubleshooting Common Errors

Torch not compiled with CUDA enabled

Reinstall PyTorch with a CUDA-enabled build that matches the installed driver and CUDA version, using the install command generated on the official PyTorch website.
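This error usually means a CPU-only wheel was installed. Wheels from the PyTorch download index carry a local version tag such as +cu121 or +cpu, so the version string alone often reveals the problem. The sketch below reads that tag; builds from other sources may have no tag at all, in which case it falls back to whether CUDA is visible.

```python
import re
from typing import Optional


def cuda_tag(torch_version: str) -> Optional[str]:
    """Local build tag of a PyTorch version string, e.g. 'cu121' from '2.4.1+cu121'."""
    match = re.search(r"\+(\w+)$", torch_version)
    return match.group(1) if match else None


def diagnose(torch_version: str, cuda_available: bool) -> str:
    tag = cuda_tag(torch_version)
    if tag == "cpu":
        return "CPU-only PyTorch build: reinstall a CUDA wheel (for example cu121 or cu124)"
    if not cuda_available:
        return "PyTorch cannot see a GPU: check the NVIDIA driver or reinstall a CUDA build"
    return f"OK ({tag or 'no local tag'})"


if __name__ == "__main__":
    try:
        import torch
        print(diagnose(torch.__version__, torch.cuda.is_available()))
    except ImportError:
        print("PyTorch is not installed")
```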

CUDA Out of Memory (OOM)

- Enable model CPU offload or sequential offload

- Use FP8/INT8 quantized models

- Lower resolution or frame count

- Install xFormers or flash-attention for memory efficiency

- Close other applications using GPU memory
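In a diffusers script, the offload and VAE options above correspond to real pipeline methods: enable_model_cpu_offload(), enable_sequential_cpu_offload(), vae.enable_slicing(), and vae.enable_tiling(). The VRAM thresholds in this sketch are hypothetical, loosely based on the 18GB FP16 peak mentioned earlier; tune them for your card and resolution.

```python
def memory_plan(vram_gb: float) -> list[str]:
    """Ordered list of diffusers memory options to enable (hypothetical thresholds)."""
    plan = ["vae.enable_slicing", "vae.enable_tiling"]  # cheap savings during decoding
    if vram_gb < 8:
        plan.insert(0, "enable_sequential_cpu_offload")  # lowest VRAM, slowest
    elif vram_gb < 18:
        plan.insert(0, "enable_model_cpu_offload")       # moves whole sub-models on demand
    return plan


def apply_plan(pipe, plan: list[str]) -> None:
    """Call each dotted method name on the pipeline, e.g. 'vae.enable_tiling'."""
    for name in plan:
        *path, method = name.split(".")
        target = pipe
        for attr in path:
            target = getattr(target, attr)
        getattr(target, method)()
```

When the plan contains no offload entry, move the pipeline to the GPU with pipe.to("cuda") yourself; with offloading enabled, skip that call and let diffusers place the modules.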

Black or corrupted video output

Check sampler settings and frame counts. Try different seeds. Disable certain attention optimizations like SageAttention during initial tests. Re-download model files if corruption persists. Ensure output paths have write permissions.

Slow generation

Verify Torch and CUDA versions match. Use acceleration backends like onediff. Reduce steps or enable tiling/slicing in VAE settings.

Keep a log of errors and solutions. Most issues trace back to memory, paths, or version mismatches.

Additional Tips for Better Results

Experiment with different seeds and CFG values. Batch smaller generations and combine them later in video editors. Store successful workflows for reuse. Monitor GPU temperatures during long sessions. Update drivers and dependencies regularly for stability.

Many users combine CogVideoX-2B with upscaling tools or frame interpolation for smoother final videos. The local setup allows unlimited generations once configured, making it cost-effective over time.

This complete installation opens doors to creative experimentation without monthly fees or privacy concerns. Start simple, then build more advanced pipelines as comfort grows.

FAQs

Can CogVideoX-2B run on an 8GB VRAM GPU?

Yes, with heavy quantization and CPU offloading, though generation will be slower. Results remain usable for testing.

Is the Apache 2.0 license truly free for commercial projects?

Yes, it allows full commercial use, modification, and distribution with proper attribution where required.

How long does a typical 6-second video take to generate?

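Times vary widely with GPU, step count, and offloading. THUDM lists roughly 90 seconds for 50 steps on a single A100, so an RTX 4070 will typically take longer, and older cards can take several minutes.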

Do I need internet after installation?

No, once models download, everything runs offline.
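If a script still tries to reach Hugging Face after setup, for example to check for newer model files, the Hugging Face libraries honor offline environment variables. This minimal sketch sets them from Python. It must run before diffusers or huggingface_hub is imported, and it assumes the models are already in the local cache.

```python
import os

# Use cached files only; setdefault leaves any value you exported yourself untouched.
os.environ.setdefault("HF_HUB_OFFLINE", "1")
os.environ.setdefault("TRANSFORMERS_OFFLINE", "1")
```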

What is the best way to improve video quality?

Use highly detailed prompts, proper frame counts, and post-process outputs in tools like CapCut or DaVinci Resolve.

Can I generate longer videos than 6 seconds?

Native limit sits around 6 seconds, but users extend clips by generating segments and stitching them together.
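Stitching can be scripted with ffmpeg's concat demuxer, assuming ffmpeg is installed and on the PATH. Clips generated with the same settings normally share codec, resolution, and frame rate, which lets them be joined without re-encoding. The list-file name clips.txt is arbitrary.

```python
import subprocess
from pathlib import Path


def write_concat_list(clips: list[str], list_path: str) -> str:
    """Write an ffmpeg concat-demuxer list file with one file '...' line per clip."""
    lines = []
    for clip in clips:
        # Absolute paths avoid surprises; a single quote is written as '\'' in this syntax.
        escaped = str(Path(clip).resolve()).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    Path(list_path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return list_path


def stitch(clips: list[str], output: str = "stitched.mp4") -> None:
    """Join clips in order without re-encoding (-c copy)."""
    list_path = write_concat_list(clips, "clips.txt")
    subprocess.run(["ffmpeg", "-y", "-f", "concat", "-safe", "0",
                    "-i", list_path, "-c", "copy", output], check=True)
```

If the clips differ in resolution or frame rate, drop -c copy so ffmpeg re-encodes them, or regenerate the segments with matching settings.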