AI video generation has reached a point where open-source models deliver results that rival paid services.

Two strong contenders stand out for creators focused on realism: Mochi 1 from Genmo and CogVideoX from Zhipu AI.

Both push boundaries in different ways, but one question remains central for most users: which one produces more believable, lifelike videos?

This detailed comparison examines exactly where each model excels and falls short when realism matters most.

From fine skin textures and natural lighting to convincing physics and human movement, the analysis covers practical performance in 2026 workflows.

By the end, users will know which model fits specific project needs, whether running locally or experimenting with prompts.

Visual Fidelity: Detail, Textures, and Lighting

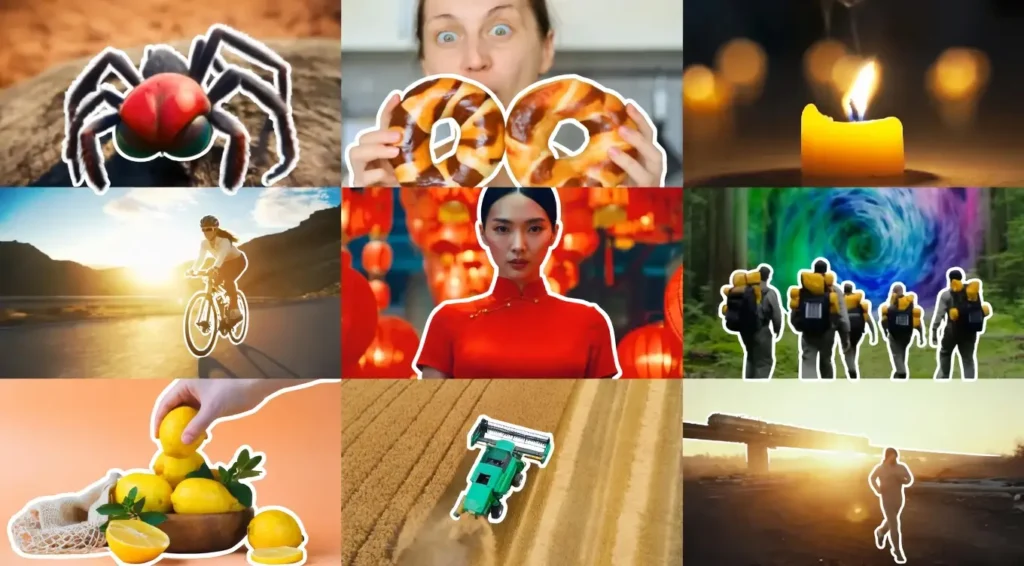

Visual quality forms the foundation of perceived realism. CogVideoX often leads here, especially in its 5B parameter version. It generates sharp facial details, realistic skin pores, fabric weaves, and material reflections that feel close to photographed footage.

Lighting behaves naturally: soft shadows fall correctly, and highlights catch on surfaces with convincing intensity.

Close-up portraits and indoor scenes benefit the most, with accurate color grading that avoids the plastic or overly saturated look common in earlier models.

Mochi 1, while strong in motion, currently operates mainly at 480p resolution in its preview release. This limits fine detail capture. Textures appear softer, and small elements like individual hairs or fabric threads lose definition.

Lighting works well in broad scenes but can flatten in complex setups with multiple light sources. The model prioritizes overall scene coherence over microscopic realism, which shows in side-by-side tests where CogVideoX renders more lifelike skin and environmental textures.

For projects needing photorealistic still-like frames within video, CogVideoX holds a clear edge. Creators who start with high-quality reference images see even better results through its image-to-video capabilities.

Motion Realism: Physics, Gravity, and Fluidity

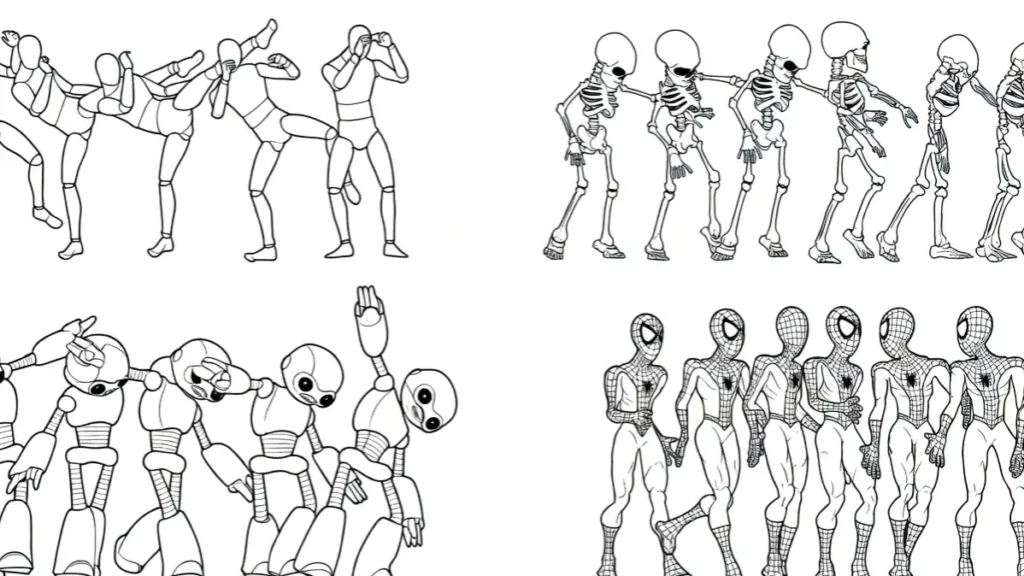

Motion separates good AI video from truly immersive content. Here, Mochi 1 stands out as the stronger performer. The 10B parameter model excels at natural character movement, weight transfer, and environmental interactions.

Hair flows realistically with wind or head turns. Clothing drapes and wrinkles according to body motion. Liquids splash and settle with believable physics, while rigid objects collide with proper momentum.

Tests with running figures, dancing sequences, or falling objects show Mochi 1 maintaining temporal consistency far better than many competitors. Movements feel grounded rather than floating, addressing a common weakness in text-to-video generation.

CogVideoX produces smoother basic transitions but sometimes exhibits floaty or weightless motion, especially with human limbs or fast actions.

Gravity effects appear less convincing, and complex interactions like hand-object contact can break down. However, it handles slower, deliberate movements well, particularly when guided by strong reference images.

For dynamic action or anything involving natural world physics, Mochi 1 delivers more convincing results. CogVideoX works better for static or gently moving scenes where detail matters more than energetic motion.

Text-to-Video vs. Image-to-Video Realism

A major practical difference appears in how each model handles starting inputs. CogVideoX shines when users provide a realistic starting image.

Its image-to-video mode transfers details effectively, maintaining facial identity, clothing, and background elements while adding motion.

This workflow gives creators more control and higher starting quality, bridging the gap toward professional results.

Mochi 1 focuses primarily on pure text-to-video generation. It interprets detailed prompts effectively and creates consistent scenes from scratch, but without a strong visual reference, fine details and exact character likeness prove harder to lock in.

The model performs admirably for conceptual or stylized realism but requires more prompt engineering to match the precision of image-guided workflows.

If a project begins with existing photos, concept art, or generated stills, CogVideoX offers a clearer path to realistic video. For completely original ideas described only in text, Mochi 1 provides competitive results with its strong prompt understanding.

Prompting for Realism: Two Different Languages

Effective prompting differs significantly between the models. Mochi 1 responds best to technical, cinematography-focused language.

Prompts that specify camera angles, lens types, lighting setups, and movement descriptions yield stronger outputs. Terms like “smooth tracking shot at eye level,” “subtle depth of field,” or “natural weight shift during walk” guide the model toward realistic behavior. Detailed scene composition and physics instructions also help.

CogVideoX favors rich, descriptive, adjective-heavy prompts. Strong style references, emotional tones, and material details work well: “photorealistic close-up of a woman with freckles and soft window lighting, gentle breeze moving her hair.”

It locks onto visual styles effectively when users describe mood, atmosphere, and specific references.

Mastering both approaches takes practice. Many creators keep separate prompt templates for each model. Hybrid workflows, such as generating a base with Mochi 1 and then refining motion or details through CogVideoX pipelines, often produce the most realistic final clips.
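The idea of keeping separate prompt templates per model can be sketched in Python. This is a minimal illustration, not an official prompt format for either model. The `Shot` fields and both function names are hypothetical, and the word order simply mirrors the advice in this section: camera and physics terms first for Mochi 1, descriptive mood and material terms first for CogVideoX.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """Hypothetical container for the pieces of a shot description."""
    subject: str   # who or what is on screen
    action: str    # what the subject does
    camera: str    # camera move and angle (leads the Mochi-style prompt)
    lighting: str  # lighting description, used by both styles
    mood: str      # atmosphere adjectives (leads the CogVideoX-style prompt)


def mochi_style_prompt(shot: Shot) -> str:
    # Cinematography-first wording: camera, then action, then lighting and physics.
    parts = [shot.camera, f"{shot.subject} {shot.action}", shot.lighting,
             "natural weight shift"]
    return ", ".join(parts)


def cogvideox_style_prompt(shot: Shot) -> str:
    # Descriptive, adjective-heavy wording: style and mood first.
    return (f"photorealistic {shot.mood} shot of {shot.subject} "
            f"{shot.action}, {shot.lighting}")


example = Shot(
    subject="a woman with freckles",
    action="walking through a park",
    camera="smooth tracking shot at eye level",
    lighting="soft overcast light",
    mood="calm, warm",
)

print(mochi_style_prompt(example))
print(cogvideox_style_prompt(example))
```

Keeping the shot description in one structure and rendering it two ways makes it easier to test the same idea on both models without rewriting the prompt by hand.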

Getting 4K Realism from 480p Outputs

Resolution remains a current limitation for Mochi 1, which outputs at 480p in its initial release. Achieving higher quality requires external upscaling tools.

Popular chains include running outputs through Topaz Video AI, Magnific, or other enhancers followed by slight reprocessing. This workflow adds steps but can elevate results significantly when combined with Mochi’s excellent motion foundation.

CogVideoX starts with better base resolutions in many implementations (up to 720p or higher in optimized versions) and maintains more detail during generation.

Its outputs need less aggressive upscaling, preserving realism better through the enhancement process. The 5B model particularly benefits from this head start, producing cleaner intermediates for post-processing.

For users targeting final 4K delivery, CogVideoX reduces workflow friction. Mochi 1 users must invest more time in post-production but gain advantages in raw motion quality that upscalers can enhance.
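A quick calculation shows why the starting resolution matters for 4K delivery. The sketch below assumes a 2160-pixel target height and uses the 480p and 720p figures quoted in this section. The function name is hypothetical, and real upscalers may work in fixed steps such as 2x or 4x.

```python
def upscale_factor(source_height: int, target_height: int = 2160) -> float:
    """Per-axis scale needed to reach the target height."""
    return target_height / source_height


for label, height in [("480p", 480), ("720p", 720)]:
    factor = upscale_factor(height)
    # Pixel count grows with the square of the per-axis factor.
    print(f"{label}: {factor:.2f}x per axis, about {factor ** 2:.0f}x the pixels")
```

A 480p source needs a 4.5x per-axis enlargement against 3x for 720p, so the upscaler has to invent roughly twice as many pixels per source pixel in the 480p case.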

Hardware Optimization: Realism on a Budget

Running these models locally demands attention to hardware. Mochi 1 requires substantial resources: often 24GB VRAM minimum for comfortable operation, with full precision needing 40-60GB depending on implementation.

Optimizations in ComfyUI and quantized versions bring it down to 20-24GB on single GPUs like an RTX 4090, though generation speed slows. Multi-GPU setups help but increase complexity.

CogVideoX proves more accessible. The 5B and smaller variants run well on 16GB VRAM cards with good speed, making them practical for a wider range of users. Quantization and efficient inference pipelines allow decent 720p outputs on consumer hardware without excessive waiting times.

For budget-conscious creators, CogVideoX offers easier entry to realistic video generation. Mochi 1 rewards users with stronger GPUs or cloud rental access, where its motion strengths become more practical.
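The VRAM figures above follow roughly from parameter count times bytes per parameter. The sketch below is a back-of-the-envelope estimate for the model weights only, using the 10B (Mochi 1) and 5B (CogVideoX) sizes cited in this article. It ignores activations, the text encoder, and the VAE, so real usage will be higher, and the function name is hypothetical.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0}


def weight_memory_gb(params_billions: float, dtype: str) -> float:
    """Approximate memory for weights alone, in GB (10**9 bytes)."""
    return params_billions * BYTES_PER_PARAM[dtype]


for name, size in [("Mochi 1 (10B)", 10.0), ("CogVideoX (5B)", 5.0)]:
    for dtype in ("fp32", "bf16", "int8"):
        print(f"{name} {dtype}: ~{weight_memory_gb(size, dtype):.0f} GB for weights")
```

Under these assumptions, a 10B model needs about 40 GB at full precision and about 20 GB in bf16, which lines up with the ranges quoted above, while a 5B model in bf16 needs about 10 GB and leaves headroom on a 16GB card.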

Use Case Matcher: Which Model for Your Project?

Different projects favor different strengths.

Best for Action/Sports: Mochi 1 wins due to superior physics and fluid motion. Running athletes, dance sequences, or sports highlights maintain natural flow and body mechanics that feel authentic.

Best for Portraits/Close-ups: CogVideoX performs better here. Detailed faces, subtle expressions, skin textures, and eye movements look more lifelike, especially with image references.

Best for Long-form Narrative: CogVideoX edges ahead with better temporal stability over multiple clips and stronger detail retention. It supports more consistent character appearance across scenes.

Other scenarios include product demonstrations (favoring CogVideoX for clean visuals) and dynamic environmental shots (favoring Mochi 1 for realistic interactions). Many advanced users combine both, using Mochi 1 for motion-heavy segments and CogVideoX for dialogue or close-up storytelling.
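The recommendations in this section can be captured as a simple lookup, which may help when routing shots in a mixed pipeline. This is only a sketch of the article's own guidance: the category labels and the `recommend` function are hypothetical, and anything not listed falls back to testing both models.

```python
# Mapping of shot categories to the model this comparison favors.
RECOMMENDATIONS = {
    "action": "Mochi 1",
    "sports": "Mochi 1",
    "environmental": "Mochi 1",
    "portrait": "CogVideoX",
    "close-up": "CogVideoX",
    "long-form narrative": "CogVideoX",
    "product demo": "CogVideoX",
}


def recommend(use_case: str) -> str:
    """Return the favored model, or a fallback for unlisted use cases."""
    key = use_case.strip().lower()
    return RECOMMENDATIONS.get(key, "test both")


for case in ("Sports", "Portrait", "music video"):
    print(f"{case}: {recommend(case)}")
```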

Common Issues: Artifacts, Warping, and Morphing

Both models still show typical AI video limitations. Mochi 1 can produce warping during extreme movements or complex multi-subject interactions.

Hands and fingers occasionally distort in fast action, though physics simulation helps overall coherence. Background elements sometimes shift unnaturally when camera motion intensifies.

CogVideoX struggles more with floaty limbs and inconsistent lighting across frames in dynamic scenes. Facial morphing appears in longer sequences without strong references. It handles static or slow scenes cleanly but can lose realism when prompts demand rapid changes.

Mitigation strategies work for both. Shorter clip generation followed by careful extension, strong negative prompts, and reference images reduce artifacts. Community fine-tunes and LoRAs continue improving edge cases for both models.
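Generating shorter clips and extending them raises a planning question: how many generations does a target length require? The sketch below assumes each clip after the first reuses a few overlapping frames for continuity. The frame counts are illustrative rather than model defaults, and `clips_needed` is a hypothetical helper.

```python
import math


def clips_needed(target_frames: int, clip_frames: int, overlap_frames: int = 0) -> int:
    """Number of generations needed to cover target_frames.

    Each clip after the first contributes clip_frames - overlap_frames new frames.
    """
    if not 0 <= overlap_frames < clip_frames:
        raise ValueError("overlap_frames must be >= 0 and smaller than clip_frames")
    if target_frames <= clip_frames:
        return 1
    step = clip_frames - overlap_frames
    return 1 + math.ceil((target_frames - clip_frames) / step)


# Example: a 240-frame sequence built from 48-frame clips with 8 frames of overlap.
print(clips_needed(240, 48, 8))
```

More overlap tends to give smoother joins but increases the number of generations, so it is worth budgeting render time with a calculation like this before starting a long sequence.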

FAQs

Which model produces more realistic videos overall?

It depends on the use case. Mochi 1 leads in natural motion and physics, while CogVideoX excels in visual detail, textures, and image-guided realism. Many creators use both depending on the shot.

Can these models run on consumer GPUs?

CogVideoX runs more easily on 16GB+ VRAM cards. Mochi 1 needs stronger optimization or 24GB+ for practical use, though quantized versions help.

How long are the generated clips?

Both typically produce 4-10 second clips natively. Longer videos require extension techniques or stitching multiple generations.

Do they support commercial use?

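Largely yes, but the terms differ. Mochi 1 is released under the Apache 2.0 license, which permits commercial use. CogVideoX licensing varies by variant: the 2B model is Apache 2.0, while the 5B model ships under its own CogVideoX license with additional conditions. Users should check the specific terms for each model and implementation before commercial deployment.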

Which is better for beginners?

CogVideoX offers a gentler learning curve with image-to-video support and lower hardware demands. Mochi 1 rewards more technical prompt crafting and hardware investment.

How do they compare to closed-source tools like Kling or Runway?

Both close the gap significantly in 2026. Mochi 1 rivals them in motion realism, while CogVideoX competes well in detail when using proper workflows. Open-source flexibility provides advantages in customization and cost for heavy users.

This comparison shows no single winner: realism depends on matching the right tool to the specific creative need. Testing both models with project-specific prompts remains the best way to decide. The open-source video generation space continues advancing quickly, with regular updates improving strengths and reducing current weaknesses for both Mochi 1 and CogVideoX.