The CogVideoX series from THUDM offers two main open-source text-to-video models that run locally. The 2B version targets accessibility on modest hardware, while the 5B version aims for higher visual fidelity and better prompt following.

This comparison examines the real differences between these models across quality, speed, hardware demands, and practical use cases.

Many creators wonder whether the larger model delivers enough improvement to justify extra resources. The sections below break down the specifics.

The Evolution of the CogVideoX Ecosystem

CogVideoX builds on the earlier CogVideo model, with a focus on better temporal consistency and prompt alignment. The 2B model serves as a lightweight entry point suitable for testing and lower-end setups.

The 5B model scales up parameters for more detailed outputs and refined motion handling. Both support text-to-video and image-to-video workflows through Hugging Face Diffusers and ComfyUI integrations.

The key distinction lies in how parameter scaling affects output. Larger models capture finer details and complex scenes more reliably, but they require more memory and processing time.

This review looks at whether those gains translate into noticeable benefits for everyday video generation tasks.

Core Architecture Differences: Under the Hood

The jump from 2 billion to 5 billion parameters brings meaningful changes in model capacity. The 5B version processes information with greater depth, leading to improved handling of intricate prompts and visual elements.

Text encoding is one place where the two models are closer than their sizes suggest. Both condition on a T5 text encoder, and the official model cards list English as the prompt language, so prompts written in Chinese or other languages are usually translated to English first.

The 5B model’s edge with nuanced instructions therefore comes from the larger transformer that consumes the text embeddings rather than from a different encoder.

Overall, the 5B model uses a more robust diffusion transformer backbone. This leads to better fusion of temporal and spatial information during generation.

The 2B model prioritizes efficiency, which sometimes limits its ability to maintain coherence in longer or more dynamic sequences.

Visual Quality Showdown: Photorealism and Text Rendering

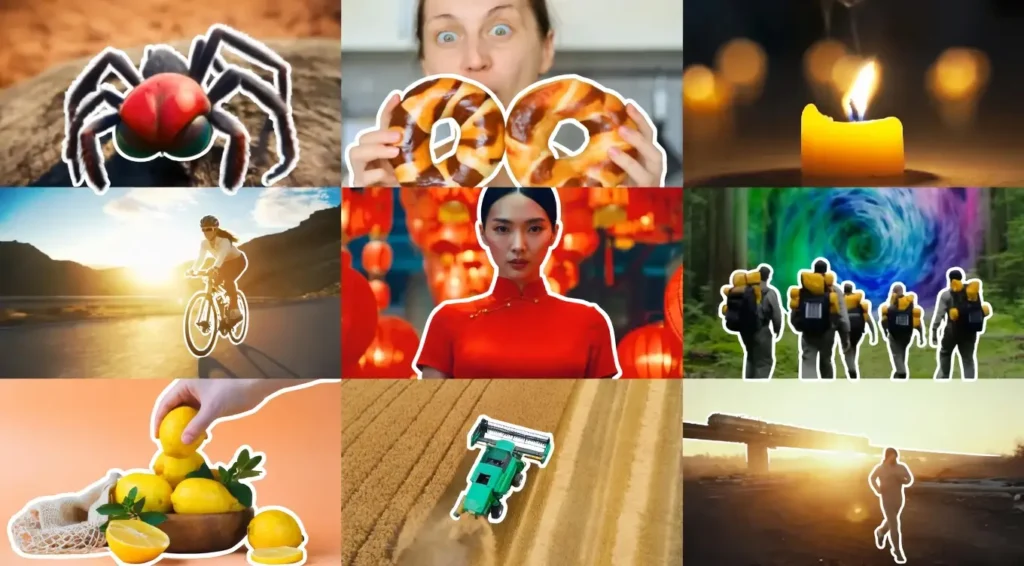

Resolution is not where the two original checkpoints separate. The official model cards list 720x480 output for both, and higher resolutions arrive only with the later CogVideoX1.5 release. At the same resolution, the 2B model produces acceptable results for quick prototypes, while the 5B model shows sharper details and better color fidelity.

Text rendering inside videos shows another gap. The 5B model renders legible words and phrases with fewer distortions, especially in dynamic scenes where text appears on signs, clothing, or overlays.

The 2B version often struggles here, producing garbled or incomplete characters when prompts demand on-screen text.

Photorealism favors the 5B model in most tests. Human faces, textures, and environmental details appear more convincing. Lighting and shadows maintain consistency better across frames.

The 2B model delivers usable results but tends toward softer, less defined visuals in complex compositions.

Prompt Compliance and Spatial Awareness

Multi-subject prompts highlight the 5B model’s advantage. It follows instructions for foreground and background placement more reliably, keeping multiple elements correctly positioned without unwanted blending.

The 2B model can handle simple scenes but often merges subjects or ignores spatial cues in crowded prompts.

Camera movement simulation also improves with the larger model. Pans, zooms, and simulated drone shots exhibit smoother trajectories and fewer abrupt shifts.

The 5B version respects directional instructions more accurately, creating cinematic motion that feels intentional. The 2B model produces basic movements but sometimes introduces jitter or inconsistent pacing.

Motion Dynamics Battle: Temporal Consistency vs. Warping

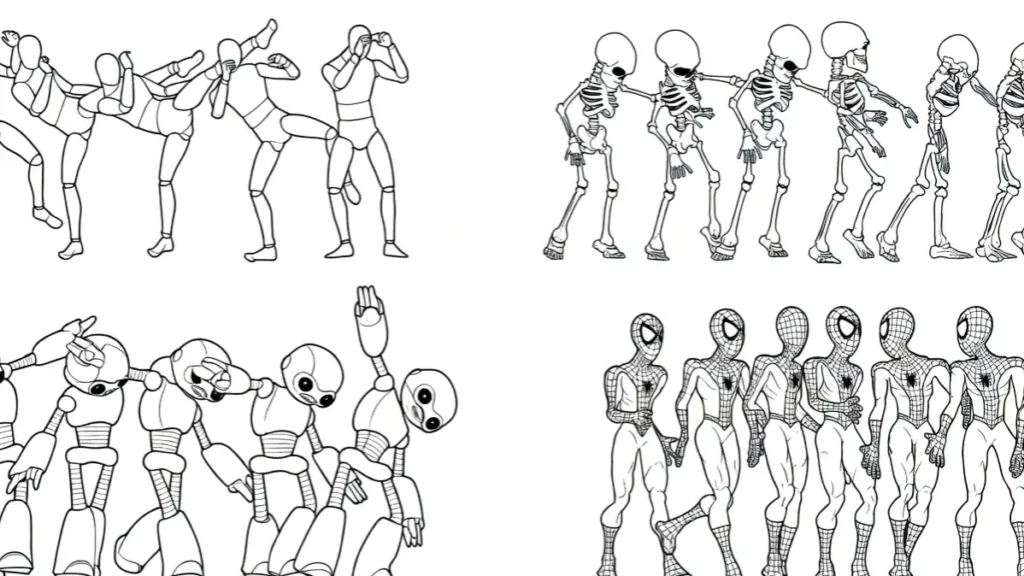

Fluidity separates the two models clearly in action sequences. The 5B model handles fast-moving subjects and object physics with greater stability. Human actions like walking, gesturing, or sports movements retain natural flow across frames.

The 2B model shows more warping and morphing issues. Facial features can shift unexpectedly between frames, and structural elements like buildings or vehicles may distort during motion.

This becomes especially visible in clips longer than a few seconds.

Temporal consistency favors the 5B model overall. It preserves object integrity and scene coherence even when prompts involve rapid changes. The 2B version works for static or slow-moving content but requires more prompt engineering to minimize artifacts in dynamic videos.

Hardware Reality Check: True VRAM Consumption Statistics

Hardware requirements create the biggest practical divide. The 2B model runs on modest setups with optimizations. Reports show it operating on GPUs with as little as 8-12GB of VRAM when quantization such as FP8 or INT8 is applied.

The 5B model demands significantly more resources. Running it at native 16-bit precision (BF16 is the recommended format for the 5B weights) often requires 16-24GB VRAM for comfortable operation, though aggressive quantization brings it down to around 12GB on some systems. Multi-GPU setups help but add complexity.
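A rough way to see where figures like these come from is to multiply parameter count by bytes per parameter. The sketch below assumes nominal counts of 2 billion and 5 billion parameters and covers the transformer weights only. The text encoder, VAE, and activations add to the total, so the result is a lower bound rather than a measurement.

```python
# Back-of-the-envelope weight memory: parameters x bytes per parameter.
# Parameter counts are nominal (2e9 and 5e9), an assumption of this sketch.
BYTES_PER_PARAM = {"fp16": 2, "bf16": 2, "fp8": 1, "int8": 1}


def weight_memory_gib(num_params: float, precision: str) -> float:
    """Return the size of the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3


for name, params in (("2B", 2e9), ("5B", 5e9)):
    for precision in ("fp16", "int8"):
        gib = weight_memory_gib(params, precision)
        print(f"{name} transformer at {precision}: {gib:.1f} GiB")
```

Under these assumptions the 5B weights alone come to roughly 9.3 GiB at 16-bit and about 4.7 GiB at 8-bit, which is consistent with quantization being what brings the larger model within reach of 12GB cards.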

Generation speeds vary by hardware. On an RTX 3060-class card, the 2B model produces clips in 2-4 minutes, while the 5B model stretches to 8-12 minutes or longer.

An RTX 4090 reduces these times substantially, with the 5B model generating 6-second clips in roughly 1-3 minutes depending on settings. The 2B version remains notably faster across all tested configurations.
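To make the speed gap concrete, the per-clip times can be turned into clips per hour. This small sketch uses only the RTX 3060-class ranges quoted above; real throughput depends on step count, offloading, and other settings.

```python
def clips_per_hour(minutes_per_clip: float) -> float:
    """Convert a per-clip generation time into hourly throughput."""
    return 60.0 / minutes_per_clip


# Minutes per clip on an RTX 3060-class card, as quoted in the text.
TIMES = {"2B": (2, 4), "5B": (8, 12)}

for model, (fastest, slowest) in TIMES.items():
    low = clips_per_hour(slowest)
    high = clips_per_hour(fastest)
    print(f"{model}: {low:.1f} to {high:.1f} clips per hour")
```

On those figures the 2B model yields 15 to 30 clips per hour against 5 to 7.5 for the 5B model, which matters most when a prompt needs many iterations.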

Text-to-Video (T2V) vs. Image-to-Video (I2V) Performance

Both models support text-to-video generation, but the 5B version delivers stronger prompt adherence and visual richness. Background details, lighting, and stylistic elements align more closely with descriptions.

Image-to-video translation shows the 5B model respecting source lighting, perspective, and composition more faithfully. It maintains the original image’s mood while adding plausible motion.

The 2B model produces motion but sometimes alters colors, angles, or key details from the reference image.

For users focused on extending still images into short clips, the 5B model provides more reliable and higher-quality results. The 2B version serves as a lighter alternative for initial experiments.
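For readers who want to try image-to-video in Diffusers, a minimal sketch follows. It assumes the `THUDM/CogVideoX-5b-I2V` checkpoint on Hugging Face and the step, frame, and guidance values commonly shown in its examples. A 2B image-to-video run would need a different checkpoint and is not shown here. The function name and file paths are hypothetical.

```python
# Checkpoint id is an assumption; confirm it on Hugging Face before use.
I2V_REPO = "THUDM/CogVideoX-5b-I2V"


def animate_image(image_path: str, prompt: str, out_path: str = "i2v.mp4") -> str:
    """Turn a still image into a short clip guided by a text prompt."""
    # Heavy imports stay inside the function so this file can be imported
    # on a machine without torch or diffusers installed.
    import torch
    from diffusers import CogVideoXImageToVideoPipeline
    from diffusers.utils import export_to_video, load_image

    pipe = CogVideoXImageToVideoPipeline.from_pretrained(
        I2V_REPO, torch_dtype=torch.bfloat16
    )
    pipe.enable_model_cpu_offload()  # keep idle submodules off the GPU
    pipe.vae.enable_tiling()         # decode the video in tiles to save VRAM

    frames = pipe(
        prompt=prompt,
        image=load_image(image_path),
        num_inference_steps=50,
        num_frames=49,
        guidance_scale=6.0,
    ).frames[0]
    export_to_video(frames, out_path, fps=8)
    return out_path
```

A call such as `animate_image("still.png", "slow push-in, leaves drifting in the wind")` downloads the weights on first use, so expect a long initial run.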

Software Workflow Integration: Standalone vs. ComfyUI Support

Both models integrate into popular local tools. Standalone Diffusers scripts offer a simple entry point for Python users. ComfyUI provides node-based workflows that appeal to visual creators who prefer drag-and-drop setups.
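A standalone Diffusers script can be as short as the sketch below. The repository ids and precisions are assumed from the Hugging Face model cards (FP16 for 2B, BF16 for 5B), and the function name, seed, and output path are illustrative rather than part of any official workflow.

```python
# Checkpoint ids and precisions assumed from the Hugging Face model cards.
CHECKPOINTS = {
    "2b": ("THUDM/CogVideoX-2b", "float16"),
    "5b": ("THUDM/CogVideoX-5b", "bfloat16"),
}


def generate(prompt: str, size: str = "2b", out_path: str = "output.mp4") -> str:
    """Generate one text-to-video clip and write it to out_path."""
    # Heavy imports stay inside the function so this file can be imported
    # on a machine without torch or diffusers installed.
    import torch
    from diffusers import CogVideoXPipeline
    from diffusers.utils import export_to_video

    repo_id, dtype_name = CHECKPOINTS[size]
    pipe = CogVideoXPipeline.from_pretrained(
        repo_id, torch_dtype=getattr(torch, dtype_name)
    )
    pipe.enable_model_cpu_offload()  # keep idle submodules off the GPU

    frames = pipe(
        prompt=prompt,
        num_inference_steps=50,
        num_frames=49,  # about six seconds at 8 fps
        guidance_scale=6.0,
        generator=torch.Generator(device="cpu").manual_seed(42),
    ).frames[0]
    export_to_video(frames, out_path, fps=8)
    return out_path

# Example call (downloads several GB of weights on first use):
# generate("A panda playing guitar in a bamboo forest", size="2b")
```

Keeping the checkpoint table separate from the generation code makes switching between 2B and 5B a one-argument change, which is convenient for side-by-side comparisons on the same prompt and seed.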

Model switching requires attention to supporting components. Both checkpoints pair with a T5 text encoder, so many workflows load it once and share it, but the transformer weights and recommended precision (FP16 for 2B, BF16 for 5B) differ and should match the model in use.

Custom nodes in ComfyUI support weight adjustments and quantization options for both models, making experimentation straightforward once the initial setup completes.

Community workflows for low-VRAM usage have proliferated, especially for the 5B model. These include tiling techniques and memory-efficient attention mechanisms that help broader audiences access the larger variant.
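In Diffusers, the low-VRAM techniques mentioned above map onto a handful of pipeline switches. The helper below is a sketch that covers CPU offloading and VAE tiling and slicing only; it does not touch attention implementations. The function name and the `aggressive` flag are hypothetical, and `pipe` is assumed to be an already loaded CogVideoX pipeline.

```python
def apply_low_vram_options(pipe, aggressive: bool = False):
    """Enable memory-saving switches on a loaded Diffusers pipeline."""
    if aggressive:
        # Streams weights to the GPU piece by piece: lowest VRAM, slowest.
        pipe.enable_sequential_cpu_offload()
    else:
        # Moves whole submodules on and off the GPU as they are needed.
        pipe.enable_model_cpu_offload()
    # Decode the latent video in tiles and slices instead of all at once.
    pipe.vae.enable_tiling()
    pipe.vae.enable_slicing()
    return pipe
```

Sequential offloading trades a large amount of speed for memory, so it is usually worth trying the default path first and switching to `aggressive=True` only after an out-of-memory error.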

Cost vs. Performance Analysis (The ROI Calculation)

The upgrade decision depends heavily on available hardware and production needs. The 2B model offers excellent accessibility.

It runs quickly on consumer cards and delivers solid results for social media clips, prototypes, and learning purposes. Generation costs stay low in terms of time and electricity.

The 5B model justifies the extra resources when output quality directly impacts the final product. Professional creators working on client videos, detailed animations, or content requiring text overlays benefit from the sharper visuals and better consistency.

However, slower speeds and higher hardware demands increase the effective cost per video.
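The electricity side of that cost is simple arithmetic: power draw times generation time times the local energy price. In the sketch below, the 170 W draw and the 0.15 per kWh price are placeholder assumptions for illustration, not measurements, and the per-clip minutes are midpoints picked from the ranges quoted earlier.

```python
def energy_cost_per_clip(minutes: float, gpu_watts: float, price_per_kwh: float) -> float:
    """Estimate the electricity cost of generating one clip."""
    kwh = gpu_watts / 1000 * minutes / 60
    return kwh * price_per_kwh


# Placeholder inputs: adjust to your own card and electricity tariff.
GPU_WATTS = 170
PRICE_PER_KWH = 0.15

for model, minutes in (("2B", 3), ("5B", 10)):
    cost = energy_cost_per_clip(minutes, GPU_WATTS, PRICE_PER_KWH)
    print(f"{model}: about {cost:.4f} per clip")
```

Because the cost scales linearly with generation time, a model that takes three to four times longer per clip costs three to four times more per clip at the same power draw.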

For users with 12GB or less VRAM, the 2B model represents the practical choice. Those with 16GB+ cards can evaluate the 5B model through quantized versions.

A hardware upgrade makes sense only when the need for consistently high-quality output outweighs the added cost in time and power.
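The VRAM guidance above can be written as a small decision helper. The thresholds come from the text; the text gives no guidance for cards between 12GB and 16GB, so the fallback for that range is an assumption of this sketch.

```python
def recommend_model(vram_gb: float) -> str:
    """Suggest a CogVideoX size from available VRAM, per the guidance above."""
    if vram_gb <= 12:
        return "2B"
    if vram_gb >= 16:
        return "5B (quantized)"
    # No guidance is given for 12-16 GB; defaulting to the smaller model
    # is an assumption made here, not a recommendation from the text.
    return "2B"


for vram in (8, 12, 16, 24):
    print(f"{vram} GB -> {recommend_model(vram)}")
```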

Final Verdict: Who Should Choose 2B and Who Should Upgrade to 5B?

The 2B model suits beginners, hobbyists, and users with limited hardware. It provides fast iterations, decent quality for casual content, and easy local deployment. Many creators start here to learn prompting and workflow before scaling up.

The 5B model fits intermediate to advanced users who need superior detail, motion quality, and prompt accuracy.

It shines in scenarios demanding professional-looking results, such as marketing videos, storytelling sequences, or content with on-screen text.

The quality jump becomes most apparent in complex or dynamic scenes.

The upgrade proves worthwhile for those already equipped with sufficient VRAM and patience for longer generation times.

Users on entry-level GPUs should stick with the 2B version or explore cloud options for occasional 5B needs. Both models advance local video generation significantly, giving creators powerful open-source tools without relying on paid APIs.

FAQs

What is the main difference between CogVideoX 2B and 5B?

The 5B model has more parameters, leading to better visual detail, prompt adherence, text rendering, and motion consistency compared to the lighter 2B version.

Can the CogVideoX 5B model run on 12GB VRAM?

Yes, with quantization techniques like FP8 or INT8 and optimized workflows in ComfyUI or Diffusers. Output quality and generation speed may drop compared to higher-VRAM setups.

Which model generates videos faster?

The 2B model consistently produces clips faster across all hardware configurations. The 5B model takes longer but delivers higher quality outputs.

Do both models support image-to-video generation?

Yes. The 5B version generally respects the input image better in terms of lighting, perspective, and composition.

Is CogVideoX open source?

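Both models have openly available weights, but the licenses differ. The 2B model is released under Apache 2.0, which allows local use, modification, and commercial applications. The 5B model uses the separate CogVideoX License, which attaches conditions to commercial use, so review its terms before using it in commercial work.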

Which model should beginners start with?

The 2B model offers a better starting point due to lower hardware requirements and faster generation times for learning and testing.