The AI video generation space has long been dominated by expensive, closed-source platforms with strict content filters and monthly fees.

Tencent changed the equation by open-sourcing Hunyuan Video, giving creators and developers full access to a powerful model without ongoing subscriptions or heavy restrictions.

This release stands out because it delivers strong visual quality and motion performance while running locally on consumer-grade GPUs once optimizations such as quantization are applied.

Many in the community see it as a serious step toward democratizing high-end video AI, especially after years of reliance on cloud services with usage limits and censorship risks.

This review covers the full picture: architecture, version differences, real performance, open-source advantages, comparisons, hardware needs, setup workflows, limitations, and practical advice for users considering local deployment.

Core Architecture: What Powers Hunyuan Video?

Hunyuan Video relies on a carefully designed pipeline built for efficiency and quality. At its foundation sits a Causal 3D Variational Autoencoder (3D VAE) that compresses video data spatially and temporally.

This compression reaches ratios of 16× spatially and 4× temporally, shrinking large video inputs into manageable latent representations without losing critical details for reconstruction.
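To make these ratios concrete, the sketch below computes the latent grid for a clip. It assumes the common causal-VAE convention in which the first frame is encoded on its own and every following group of 4 frames shares one latent step. The function name `latent_shape` and the example clip size are illustrative, not taken from the Hunyuan codebase.

```python
def latent_shape(frames, height, width, spatial=16, temporal=4):
    """Return (latent_frames, latent_height, latent_width) for a clip.

    Assumption: causal temporal compression, so the first frame maps to its
    own latent step and the remaining frames are grouped `temporal` at a time.
    """
    if (frames - 1) % temporal != 0:
        raise ValueError("frames should be of the form temporal * k + 1")
    if height % spatial or width % spatial:
        raise ValueError("height and width should be multiples of `spatial`")
    return (frames - 1) // temporal + 1, height // spatial, width // spatial


# A hypothetical 121-frame 1280x720 clip
print(latent_shape(121, 720, 1280))
```

Under these assumptions a 121-frame 720p clip shrinks to a 31 × 45 × 80 latent grid, roughly 1,000 times fewer positions than the original pixel grid (channel counts are ignored here).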

The main generation engine is a Diffusion Transformer (DiT) framework operating in two stages. The first stage generates base videos 5 to 10 seconds long at 480p to 720p.

A dedicated video super-resolution network then upscales these to 1080p, sharpening details while preserving motion coherence. This staged approach balances speed and fidelity better than single-pass systems that often sacrifice one for the other.
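A rough way to see why the staged design saves compute is to compare pixel counts per frame at the base and final resolutions. The helper below is a back-of-the-envelope sketch, assuming the aspect ratio stays fixed so the pixel count scales with the square of the height; `upscale_cost` is a hypothetical name.

```python
def upscale_cost(base_height, target_height=1080):
    """Return (linear_scale, pixel_ratio) between base and target frames.

    Assumption: same aspect ratio at both resolutions, so the number of
    pixels per frame grows with the square of the height ratio.
    """
    scale = target_height / base_height
    return scale, scale ** 2


for base in (480, 720):
    scale, ratio = upscale_cost(base)
    print(f"{base}p -> 1080p: {scale}x per side, {ratio}x pixels per frame")
```

Generating at 480p means the diffusion stage handles about 5 times fewer pixels per frame than a direct 1080p pass would, leaving the final detail to the lighter super-resolution network.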

Text understanding comes from a bilingual glyph-aware encoder that handles both Chinese and English prompts effectively. It captures nuanced descriptions, including text rendering inside scenes, which many competing models struggle with.

The architecture also incorporates selective and sliding tile attention (SSTA) mechanisms. These reduce computational load during inference by focusing attention on relevant spatial-temporal regions rather than processing every element uniformly.
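The sliding-tile half of this idea can be illustrated with a toy block mask. This is not Tencent's SSTA implementation and it leaves out the selective part entirely. It only shows how restricting each tile of tokens to nearby tiles cuts the number of attention pairs. The grid size, window radius, and function names are assumptions chosen for illustration.

```python
from itertools import product


def sliding_tile_neighbors(grid, window):
    """Map each query tile to the key tiles it may attend to.

    grid:   number of tiles along (time, height, width)
    window: how many tiles away a key may be along each axis
    """
    tiles = list(product(*(range(n) for n in grid)))
    return {
        q: [k for k in tiles
            if all(abs(qi - ki) <= r for qi, ki, r in zip(q, k, window))]
        for q in tiles
    }


def attention_density(grid, window):
    """Fraction of tile pairs kept, relative to full attention."""
    neighbors = sliding_tile_neighbors(grid, window)
    kept = sum(len(keys) for keys in neighbors.values())
    return kept / (len(neighbors) ** 2)


# Toy example: a 4x4x4 grid of tiles, each attending one tile in every direction
print(attention_density((4, 4, 4), (1, 1, 1)))
```

In this toy setting fewer than a quarter of the tile pairs are kept, and the saving grows as the grid gets larger relative to the window.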

The result is a model that maintains strong semantic alignment between prompts and outputs while keeping generation times reasonable on consumer hardware after quantization.

Version Evolution: HunyuanVideo 1.0 vs HunyuanVideo 1.5

The original HunyuanVideo 1.0 used around 13 billion parameters and delivered solid cinematic results but demanded substantial VRAM, often 40-60GB or more for comfortable operation. This limited its appeal to users with enterprise-grade setups.

HunyuanVideo 1.5 brings a major efficiency leap with only 8.3 billion parameters. Despite the smaller size, it achieves comparable or better visual quality and motion coherence in many tests.

Key improvements include refined data curation, progressive pre-training and post-training strategies, and the selective and sliding tile attention (SSTA) mechanism, which accelerates inference significantly.

VRAM requirements dropped noticeably. Version 1.5 runs more smoothly on mid-to-high-end consumer GPUs, especially with FP8 and GGUF quantizations.
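The effect of parameter count and quantization on memory can be estimated with simple arithmetic. The sketch below covers the transformer weights only. Activations, the VAE, and the text encoder add to the real footprint, and the flat 4-bit figure ignores the per-block scale overhead that GGUF formats carry, so treat the numbers as lower bounds under those assumptions.

```python
# Nominal storage cost per parameter (assumed, ignoring format overhead)
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "4bit": 0.5}


def weight_memory_gb(params_billions, precision):
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


for name, params in (("HunyuanVideo 1.0", 13.0), ("HunyuanVideo 1.5", 8.3)):
    for precision in ("bf16", "fp8", "4bit"):
        size = weight_memory_gb(params, precision)
        print(f"{name} @ {precision}: ~{size:.1f} GB of weights")
```

By this estimate the 1.5 weights drop from about 16.6 GB in bf16 to about 8.3 GB in FP8, which is consistent with the model fitting on 12-24GB cards once the remaining components are accounted for.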

Generation times improved, and the model handles both text-to-video and image-to-video tasks within a unified framework.

The inclusion of a built-in super-resolution component further boosts final output sharpness without needing external upscalers in many cases.

Users report that 1.5 feels more practical for everyday experimentation while retaining the strengths that made the first version competitive against closed-source alternatives.

Real-World Performance Review: Visual and Motion Fidelity

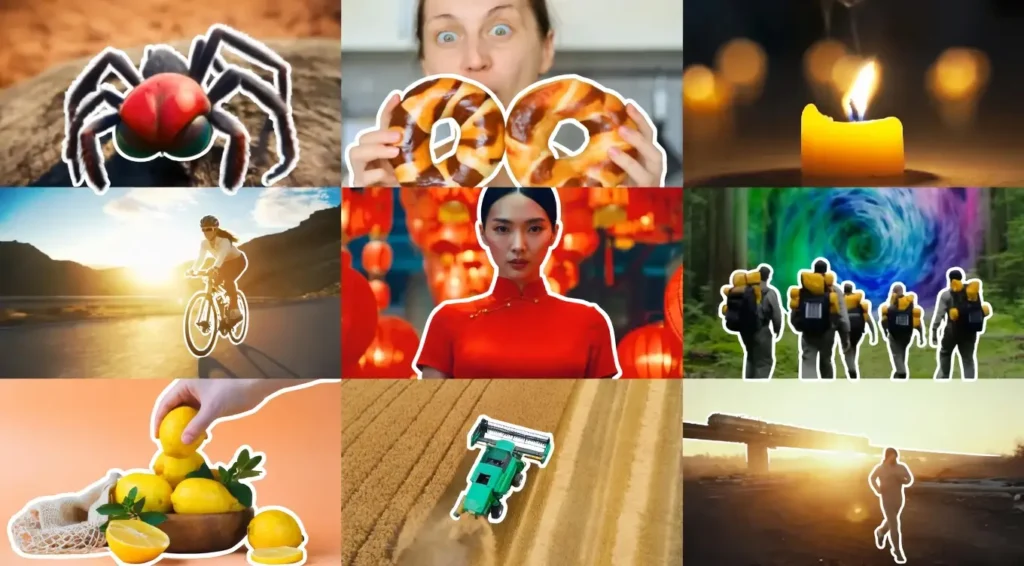

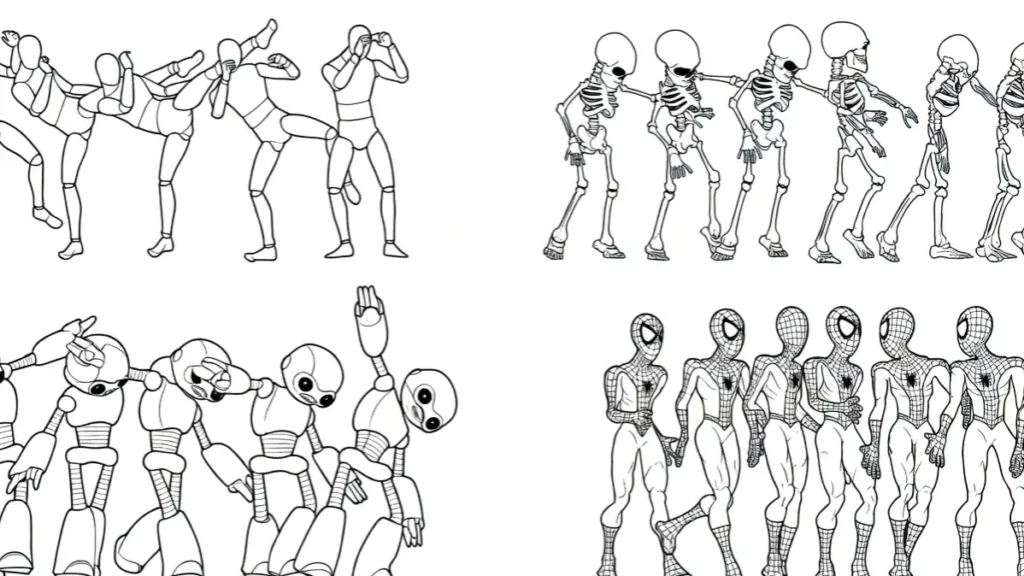

Hunyuan Video performs strongly in text-to-video tasks. It produces clean visuals with good lighting consistency, detail retention, and reliable camera movements such as pans and zooms.

Text rendering inside scenes works better than many open-source peers, and it handles realistic-to-stylized transitions effectively.

Image-to-video generation stands out for frame consistency. Starting from a reference image, the model animates subjects while preserving tone, style, and identity across frames.

Facial expressions and micro-movements come through naturally in simpler scenarios.

Physics and motion fidelity show mixed results. The model excels at human-centric actions and basic interactions but can appear stiff or robotic in complex multi-character scenes, fast action sequences, or animal movements.

Camera work remains stable, supporting cinematic aesthetics for storytelling or promotional content.

Overall scores from community benchmarks place it near the top among open-source options for visual quality, though motion naturalness sometimes trails specialized competitors.

Outputs at 1080p after super-resolution look polished for social media, prototypes, and creative experiments.

Open-Source Freedom: The “Uncensored” Factor

Releasing the full model weights and code gives users complete control. Unlike cloud platforms that enforce strict safety filters, local runs of Hunyuan Video allow broader creative freedom.

This appeals to artists, researchers, and developers exploring edge cases or specific styles without content blocks.

The community appreciates the lack of mandatory cloud guardrails. Safety remains a shared responsibility: users can implement their own filters if needed. Many highlight this openness as a major advantage for experimentation, fine-tuning, and building custom applications.

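Commercial use is permitted under the model's license, which is Tencent's own community license rather than a standard open-source one and includes territorial and scale restrictions. Within those terms, it removes barriers for independent creators and small studios.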

The model encourages community contributions, leading to rapid improvements in workflows, custom nodes, and quantization techniques.

Comparative Battle: Tencent Hunyuan Video vs Alibaba Wan2.1

Hunyuan Video and Wan2.1 represent two leading open-source contenders, each with distinct strengths.

Hunyuan often wins on visual appeal, cinematic styling, and text rendering. Its outputs frequently look more polished and film-like, with strong consistency in lighting and human subjects.

Generation speed after optimizations also favors it in many user reports.

Wan2.1 tends to lead in motion dynamics and physics realism. It handles complex movements, dancing, mechanical actions, and natural element interactions (like flowing hair or fabric) with greater fluidity according to direct comparisons.

Some testers note better anatomical accuracy and texture preservation during intense motion.

Hardware efficiency varies by setup, but both benefit from quantization. Hunyuan 1.5's lighter design makes it more approachable for mid-range cards after FP8 conversion.

Wan2.1 shines in scenarios demanding precise physics but can require more careful prompting for style consistency.

Many creators use both models depending on the project: Hunyuan for aesthetic-driven videos and Wan2.1 for high-motion realism. The choice ultimately depends on priorities: visual polish versus movement naturalness.

Local Hardware VRAM Requirements

Full-precision Hunyuan Video demands significant resources. The base model often requires 45GB+ VRAM for stable 720p-1080p generation, putting it out of reach for most standard gaming GPUs without heavy optimization.

This gap narrows dramatically with community tools. FP8 quantization and GGUF formats reduce memory footprint substantially.

Many users now run effective workflows on 12-24GB cards, with some achieving basic results on 8-10GB setups through aggressive tiling and lower resolutions.

The RTX 4090 (24GB) serves as a sweet spot for comfortable 1080p work. Lower-end cards like the RTX 3080 or 4070 need careful configuration, including tile size adjustments and reduced frame counts. System RAM also plays a supporting role, since offloaded data is swapped into it.
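The tiers described in this section can be summarized as a small lookup. The thresholds below are the rough figures quoted above, not measured limits, and `suggest_setup` is a hypothetical helper rather than part of any tool.

```python
def suggest_setup(vram_gb):
    """Map available VRAM to the configuration tier described in this section."""
    if vram_gb >= 45:
        return "full precision"
    if vram_gb >= 12:
        return "FP8 or GGUF quantized weights"
    if vram_gb >= 8:
        return "quantized weights, aggressive tiling, lower resolution"
    return "below the range reported as workable"


for card, vram in (("RTX 4090", 24), ("RTX 4070", 12), ("RTX 3080", 10)):
    print(f"{card} ({vram}GB): {suggest_setup(vram)}")
```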

The hardware barrier exists but continues to lower as quantization and ComfyUI integrations improve.

Step-by-Step ComfyUI Workflow Integration

ComfyUI provides the most popular way to run Hunyuan Video locally. Setup starts with installing the official HunyuanVideo wrapper nodes or community forks like Kijai’s loaders.

Key steps include:

- Downloading the base model weights and VAE from Hugging Face.

- Placing FP8 or GGUF quantized versions in the appropriate folders for lower VRAM use.

- Setting the weight dtype to fp8_e4m3fn in the Load Diffusion Model node.

- Configuring tiled VAE decoding (tile size around 192-256 with an appropriate overlap) to avoid OOM errors.

- Setting resolutions like 832×480 or 720p for balanced quality and speed.

- Using 8-12 steps for FastVideo variants to maintain quality while speeding up inference.
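The settings above can be gathered in one place, and the tiling step is easier to reason about with a small calculation. The sketch below is not ComfyUI code: the dictionary keys are hypothetical labels for the values listed in the steps, the 64-pixel overlap is an assumed example, and `tile_starts` only illustrates how overlapping tiles cover a frame so that each decode call works on a small region.

```python
# Hypothetical summary of the settings listed above (keys are illustrative)
settings = {
    "width": 832,
    "height": 480,
    "weight_dtype": "fp8_e4m3fn",
    "steps": 10,          # within the 8-12 range suggested for FastVideo variants
    "tile_size": 256,     # within the 192-256 range
    "tile_overlap": 64,   # assumed value for illustration
}


def tile_starts(length, tile, overlap):
    """Start offsets of overlapping tiles that cover [0, length)."""
    if overlap >= tile:
        raise ValueError("overlap must be smaller than the tile size")
    if tile >= length:
        return [0]
    stride = tile - overlap
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the far edge
    return starts


cols = tile_starts(settings["width"], settings["tile_size"], settings["tile_overlap"])
rows = tile_starts(settings["height"], settings["tile_size"], settings["tile_overlap"])
print(cols, rows, len(cols) * len(rows))
```

Smaller tiles lower peak memory at the cost of more decode passes per frame, which is why tile size is usually the first value to reduce after an out-of-memory error.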

Prompting works best with detailed, structured descriptions. Including camera directions, lighting, and subject actions improves results.

Negative prompts help avoid common artifacts. Testing shorter clips first allows quick iteration before scaling to longer sequences.
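One way to keep prompts structured is to assemble them from named parts. The helper below is a minimal sketch. The field names, the example wording, and the negative prompt are illustrative assumptions, not a format the model requires.

```python
def build_prompt(subject, action, camera, lighting, style=None):
    """Join the prompt components into one comma-separated description."""
    parts = [f"{subject} {action}", camera, lighting]
    if style:
        parts.append(style)
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_prompt(
    subject="a red fox",
    action="trotting through fresh snow",
    camera="slow tracking shot",
    lighting="soft morning light",
)
negative_prompt = "blurry, distorted faces, flickering"  # example only
print(prompt)
```

Keeping the parts separate makes it easy to change one element, such as the camera direction, between short test clips.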

The workflow supports both text-to-video and image-to-video paths. Extensions and custom nodes handle upscaling, frame interpolation, and basic editing.

Current Limitations: What’s Missing in the Current Stack?

Despite strong capabilities, Hunyuan Video has clear gaps. Native audio generation and lip-sync features are absent, requiring external tools for sound integration or dialogue videos.

This limits its use for talking-head content or music videos without post-production work.
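Adding sound in post-production is often done with FFmpeg. The sketch below only builds the command. The file names are placeholders, and it assumes FFmpeg is installed and that the generated clip is an MP4 whose video stream can be copied without re-encoding.

```python
import shlex


def mux_command(video_path, audio_path, output_path):
    """Build an FFmpeg command that attaches an audio track to a silent clip."""
    return [
        "ffmpeg",
        "-i", video_path,      # generated video (no audio)
        "-i", audio_path,      # separately produced soundtrack
        "-map", "0:v:0",       # take video from the first input
        "-map", "1:a:0",       # take audio from the second input
        "-c:v", "copy",        # keep the video stream as is
        "-c:a", "aac",         # encode audio for MP4 playback
        "-shortest",           # stop at the end of the shorter stream
        output_path,
    ]


cmd = mux_command("clip.mp4", "soundtrack.wav", "clip_with_audio.mp4")
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` to perform the mux.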

Complex multi-character interactions and fast-paced action sequences can produce artifacts or unnatural movements.

Prompt adherence sometimes falters with very detailed or contradictory instructions, leading to fallback behaviors or inconsistent outputs.

Native generation length remains capped at short clips (around 5-10 seconds), though extensions help build longer pieces.

Real-time performance is not yet feasible on consumer hardware. Some users report occasional jitter or lighting shifts in extended generations.

The model performs better with human subjects and simpler physics than with intricate animal movements or highly stylized anime content in certain tests.

FAQs

Is Hunyuan Video completely free to use? Yes. The model weights and code are open-source, allowing free local deployment with no subscription or per-generation fees.

What hardware is needed to run Hunyuan Video locally? Full precision requires 45GB+ VRAM, but optimized FP8 and GGUF versions run on 12-24GB GPUs. Some workflows work on 8-10GB cards with lower resolutions and tiling.

Does Hunyuan Video support image-to-video generation? Yes. It handles both text-to-video and image-to-video tasks effectively within the same framework, with good subject consistency from reference images.

How does Hunyuan Video compare to closed-source tools? It delivers competitive visual quality and motion in many cases while offering full local control and no usage limits, though some premium cloud services may edge it in specific physics or length capabilities.

Can Hunyuan Video generate videos with audio? Native audio generation is not available. Users typically add sound in post-production or combine outputs with separate lip-sync tools.

Is it suitable for commercial projects? Yes, subject to the license terms. Tencent's community license permits commercial use with some territorial and scale restrictions, and generated content belongs to the user.