Mochi Diffusion AI is an open-source AI video generation model developed by Genmo.

It specializes in creating high-quality videos from text prompts, with a strong focus on realistic motion and physics.

The model generates smooth 1080p clips up to 10 seconds long and runs completely locally on your own hardware.

It stands out among open-source tools for its natural movement and physical accuracy.

Top benefit of Mochi Diffusion AI

The biggest advantage is its impressive motion realism and physics understanding.

Videos feel grounded and believable instead of floating or jittery.

This makes it especially useful for dynamic scenes where natural movement matters most.

VRAM requirements

Because Mochi Diffusion AI runs locally, the VRAM you need depends on the output resolution.

- 720p generation requires around 12 to 14 GB VRAM.

- For smooth 1080p output, 20 to 24 GB VRAM is recommended.

Lower-end GPUs with 8 to 10 GB of VRAM can run it, but only at reduced resolution and slower speeds.
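The tiers above can be sketched as a small lookup. This is only an illustration built from the figures quoted in this review. The function name `recommend_resolution` and the label for cards under 8 GB are assumptions, not part of any Genmo tooling, and the review gives no guidance for cards below 8 GB.

```python
def recommend_resolution(vram_gb: float) -> str:
    """Map available VRAM (in GB) to the tier described in this review.

    Thresholds come from the review's own numbers; they are rough
    guidance, not official requirements.
    """
    if vram_gb >= 20:
        # 20 to 24 GB is the range recommended for smooth 1080p output.
        return "1080p"
    if vram_gb >= 12:
        # Around 12 to 14 GB is quoted for 720p generation.
        return "720p"
    if vram_gb >= 8:
        # 8 to 10 GB cards can run it, at reduced resolution and speed.
        return "reduced resolution"
    # Assumption: the review says nothing about cards under 8 GB.
    return "below the range covered by this review"


if __name__ == "__main__":
    for gb in (24, 16, 12, 8, 6):
        print(f"{gb} GB -> {recommend_resolution(gb)}")
```

Pass in the VRAM your GPU reports to see which tier it falls into before you start the setup.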

Mochi Diffusion AI Features

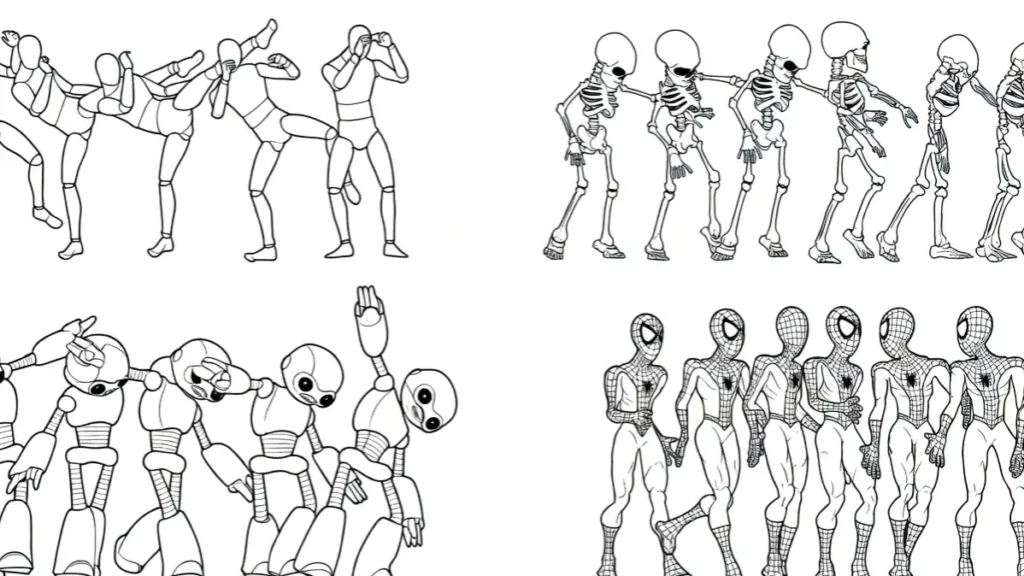

- Realistic motion and physics

Objects and characters move with proper weight and gravity.

Cloth, hair, and water behave naturally in most scenes.

- Strong prompt following

It understands detailed descriptions including camera angles and styles.

Complex actions come out cleaner than many other open models.

- Clean 1080p output

Videos have good detail, lighting, and color accuracy.

Results look sharp right from the first generation.

- Completely local and unlimited

You can generate as many videos as your hardware allows.

No credits, watermarks, or daily limits apply.

- Fast experimentation

Generation speed is reasonable on high-end GPUs.

This supports quick testing and iteration of ideas.

Pros

- Excellent motion realism for an open-source model

- Strong physics simulation in dynamic scenes

- Fully free with open weights and no usage restrictions

- Good visual quality at 1080p resolution

- Runs completely offline on your own PC

Cons

- High VRAM requirements for best performance

- Limited to 8-10 second clips currently

- No audio generation is included

- Setup requires technical knowledge and GitHub installation

- Struggles with very complex multi-subject interactions

Mochi Diffusion AI vs alternatives

| Feature | Mochi Diffusion AI | Kling AI 2.6 | Runway Gen-3 | Luma Dream Machine |
|---|---|---|---|---|
| Open-source and local | Yes | No | No | No |
| Motion realism | Very Good | Excellent | Very Good | Good |
| Physics accuracy | Strong | Strong | Average | Average |
| Max clip length | 8-10 seconds | 10 seconds | 10-16 seconds | 5-10 seconds |
| Cost | Free | Freemium | Paid | Paid |
| VRAM needed | 12-24 GB | Cloud only | Cloud only | Cloud only |

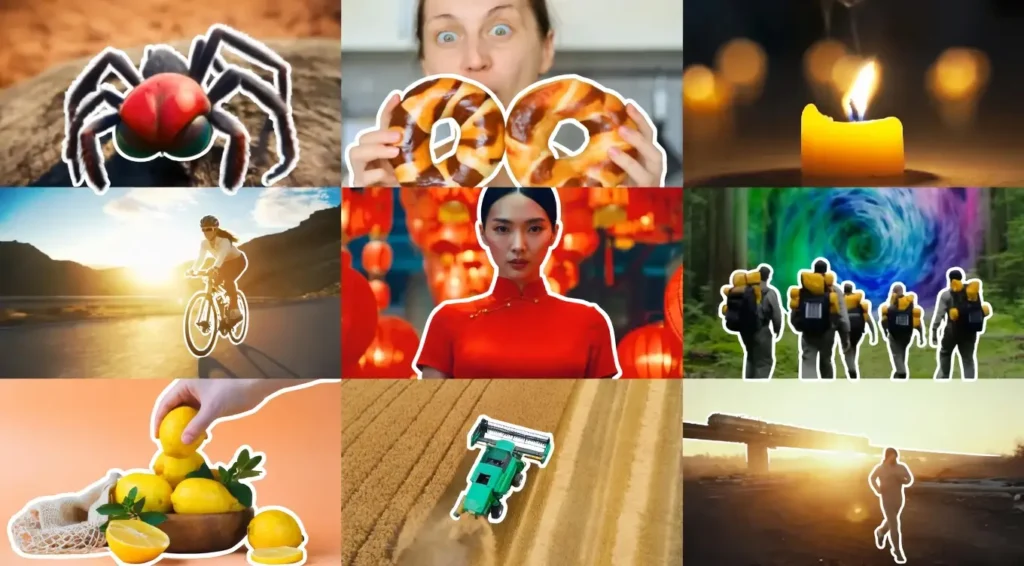

Quick picks

- A red sports car drifting around a mountain corner with realistic tire smoke

- A fluffy cat jumping onto a windowsill with natural fur and soft landing

- A samurai running through a rainy street showing accurate water splashes

My experience with Mochi Diffusion AI

I spent several days generating motion-heavy videos and testing physics behavior.

The natural weight and movement of objects impressed me most.

Setup took some time and effort, but once it was running locally, the freedom of unlimited generations felt refreshing.

It still has limitations with longer clips and crowded scenes, but it delivers strong results for single-subject dynamic shots.

Rating

Persistence and physics: 9.2

Ease of setup: 6.0

Visual quality: 8.1

Innovation: 8.7

Value (free): 10

Final thoughts

Mochi Diffusion AI is currently one of the strongest open-source video generation models.

Its realistic motion and physics make it stand out, especially for users who want to run everything locally.

It still needs work on clip length and complex scenes, but being completely free and open-source makes it a solid choice for developers and creators who value freedom and offline capability.

FAQs

Is Mochi Diffusion AI completely free?

Yes, the model weights and code are fully open-source with no usage limits.

What GPU do I need to run Mochi Diffusion AI?

You need at least 12 GB of VRAM for usable 720p. For smooth 1080p, 20 to 24 GB is recommended.

Does Mochi Diffusion AI generate audio?

No, it currently produces silent video only.

How long can videos be with Mochi Diffusion AI?

Maximum length is currently 8 to 10 seconds per generation.

Is Mochi Diffusion AI easy to install?

It requires some technical knowledge and terminal commands for setup.

Can I use Mochi Diffusion AI for commercial projects?

Yes. It uses a permissive open-source license, so commercial use is allowed.

How does Mochi Diffusion AI compare to paid tools?

It offers better motion realism than many free alternatives and competes well with paid cloud models on physics, though it lacks their ease of use.

Where can I download Mochi Diffusion AI?

Official weights and code are available on the Genmo GitHub repository and Hugging Face.